When editing processes, you can use the following algorithms to classify image objects:

You will already have some familiarity with class descriptions and hierarchies from the basic tutorial, where you manually assigned classes to the image objects derived from segmentation.

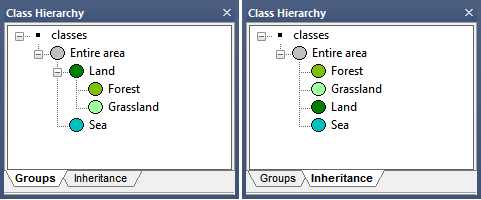

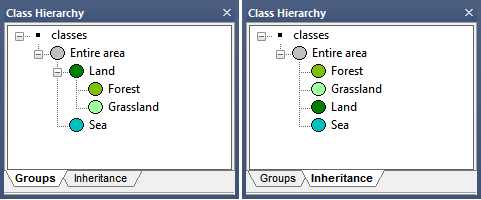

There are two views in the Class Hierarchy window, which can be selected by clicking the tabs at the bottom of the window:

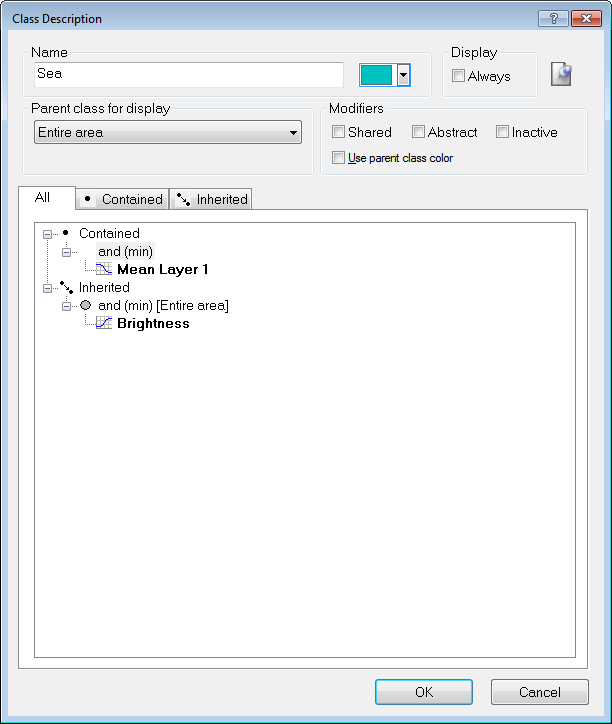

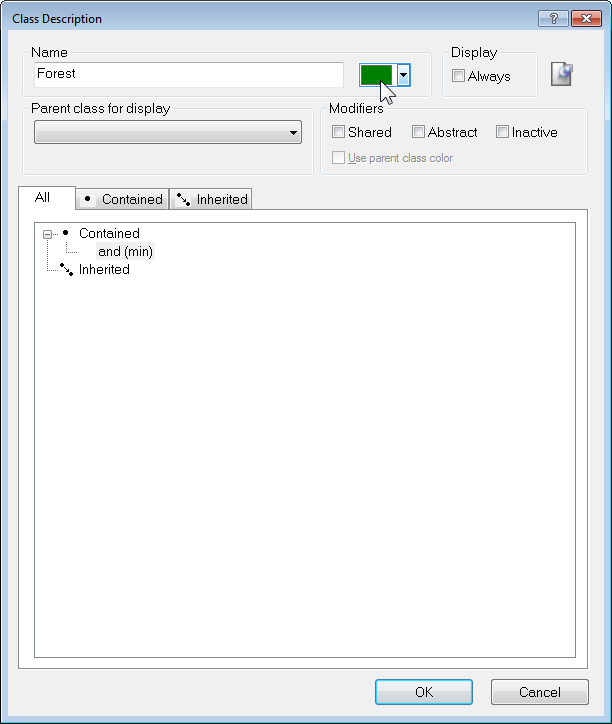

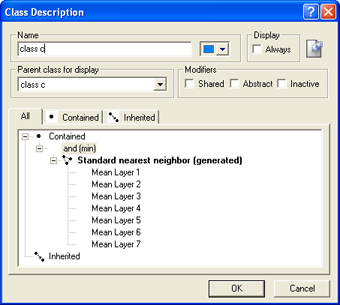

Double-clicking a class in either view will launch the Class Description dialog box. The Class Description box allows you to change the name of the class and the color assigned to it, and to insert a comment. Additional features are:

There are two ways of creating and defining classes: directly in the Class Hierarchy window, or from processes in the Process Tree window.

To create a new class, right-click in the Class Hierarchy window and select Insert Class. The Class Description dialog box will appear.

Enter a name for your class in the Name field and select a color of your choice. Press OK and your new class will be listed in the Class Hierarchy window.

Many algorithms allow the creation of a new class. When the Class Filter parameter is listed under Parameters, clicking on the value will display the Edit Classification Filter dialog box. You can then right-click on this window, select Insert Class, then create a new class using the same method outlined in the preceding section.

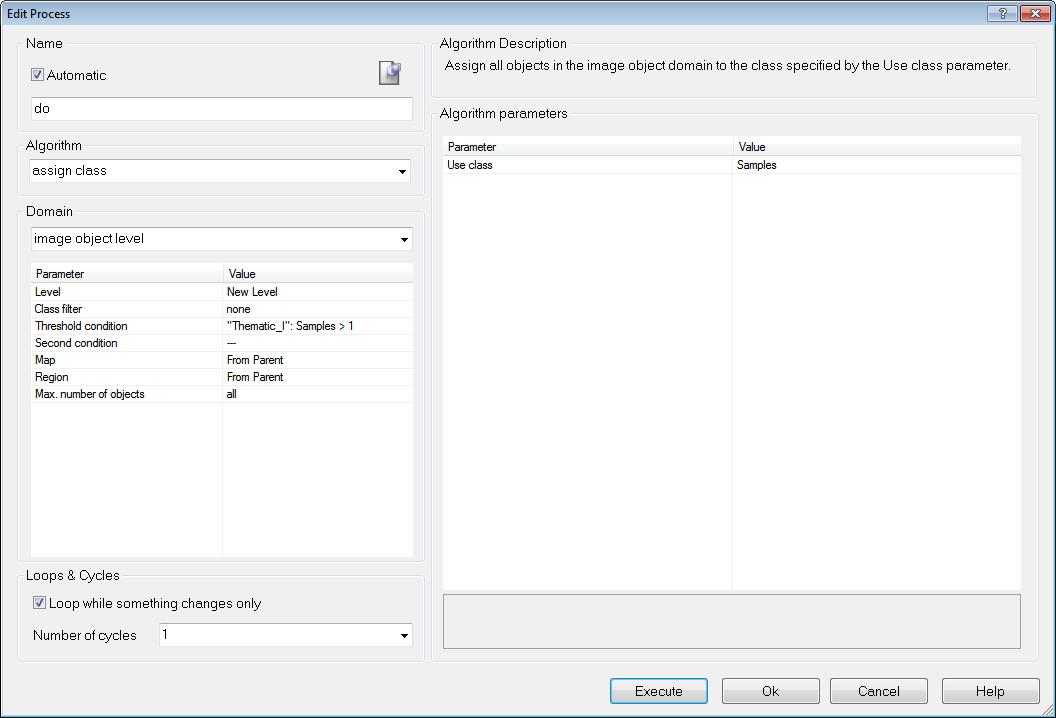

The Assign Class algorithm is a simple classification algorithm, which allows you to assign a class based on a condition (for example a brightness range):

In the Algorithm Parameters pane, opposite Use Class, select a class you have previously created, or enter a new name to create a new one (this will launch the Class Description dialog box).

You can edit the class description to handle the features describing a certain class and the logic by which these features are combined.

A new or an empty class description contains the 'and (min)' operator by default.

Although logical terms (operators) and similarities can be inserted into a class as they are, the nearest neighbor and the membership functions require further definition.

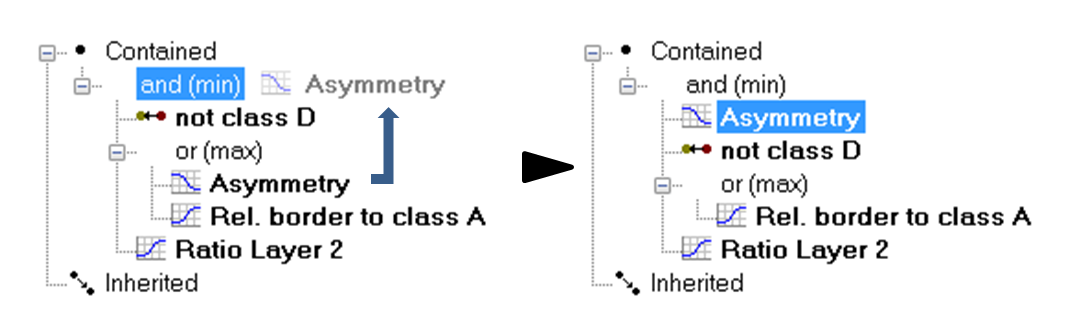

To move an expression, drag it to the desired location.

To edit an expression, double-click the expression or right-click it and choose Edit Expression from the context menu. Depending on the type of expression, one of the following dialog boxes opens:

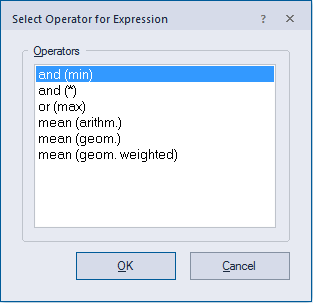

The following expression operators are available in eCognition if you select Edit Expression or Insert expression > Logical terms:

Example: Consider four membership values of 1 each and one of 0. The 'and'-operator yields the minimum value, i.e., 0, whereas the 'or'-operator yields the maximum value, i.e., 1. The arithmetic mean yields the average value, in this case a membership value of 0.8.
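The example above can be reproduced with a few lines of Python. This is only an illustrative sketch of the three operators; the function names are hypothetical and are not part of eCognition.

```python
def fuzzy_and(values):
    """'and (min)': the lowest membership value determines the result."""
    return min(values)


def fuzzy_or(values):
    """'or (max)': the highest membership value determines the result."""
    return max(values)


def fuzzy_mean(values):
    """Arithmetic mean: every membership value contributes to the result."""
    return sum(values) / len(values)


# Four membership values of 1 and one of 0, as in the example.
memberships = [1.0, 1.0, 1.0, 1.0, 0.0]

print(fuzzy_and(memberships))   # 0.0
print(fuzzy_or(memberships))    # 1.0
print(fuzzy_mean(memberships))  # 0.8
```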

See also Fuzzy Classification using Operators and Adding Weightings to Membership Functions.

Image objects retain the status 'undefined' when they do not meet the criteria of a feature. If you want to use these image objects anyway, for example for further processing, you must put them in a defined state. The function Evaluate Undefined assigns the value 0 for a specified feature.

To delete an expression, either:

The Nearest Neighbor classifier is recommended when you need to make use of a complex combination of object features, or your image analysis approach has to follow a set of defined sample image objects. The principle is simple – first, the software needs samples that are typical representatives for each class. Based on these samples, the algorithm searches for the closest sample image object in the feature space of each image object. If an image object's closest sample object belongs to a certain class, the image object will be assigned to it.

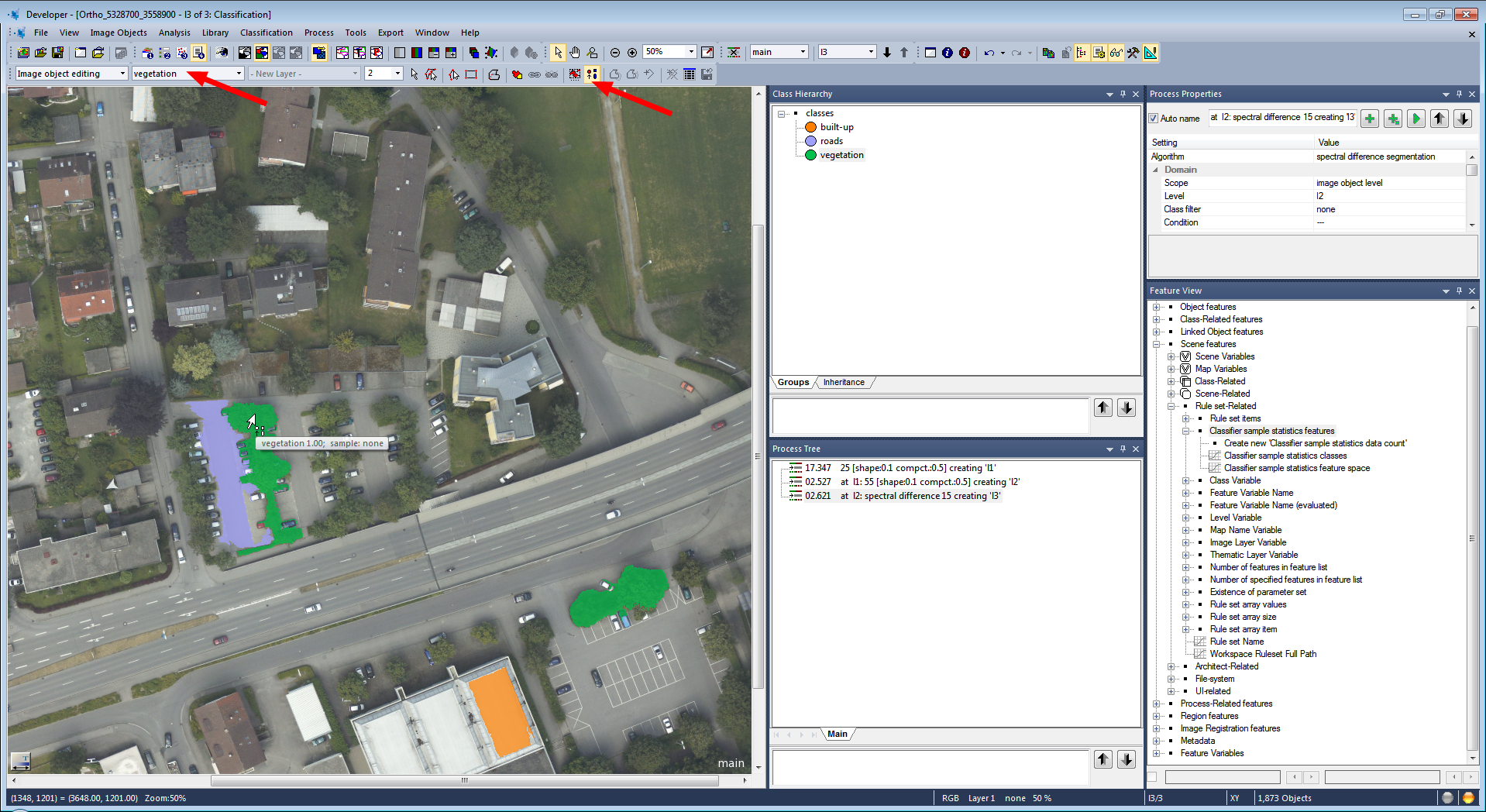

For advanced users, the Feature Space Optimization function offers a method to mathematically calculate the best combination of features in the feature space. To classify image objects using the Nearest Neighbor classifier, follow the recommended workflow:

Defining Sample Image Objects

For the Nearest Neighbor classification, you need sample image objects. These are image objects that you consider a significant representative of a certain class and feature. By selecting them, you train the Nearest Neighbor classification algorithm to differentiate between classes. The more samples you select, the more consistent the classification. You can define a sample image object manually by clicking an image object in the map view.

You can also load a Test and Training Area (TTA) mask, which contains previously manually selected sample image objects, or load a shapefile, which contains information about image objects.

Comments can be added to expressions using the same principle described in Adding Comments to Classes.

The Edit Classification Filter is available from the Edit Process dialog for appropriate algorithms (for example, the classification algorithm) and can be launched from the Class Filter parameter.

The buttons at the top of the dialog allow you to:

The Use Array drop-down box lets you filter classes based on arrays.

The Assign Class algorithm is the simplest classification algorithm. It uses a condition to determine whether an image object belongs to a class or not.
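The idea of assigning a class from a single condition can be sketched as follows. This is a conceptual illustration only: the dictionary layout of the image objects, the feature name `brightness` and the function `assign_class` are assumptions made for this example, not eCognition structures.

```python
def assign_class(objects, feature, low, high, class_name):
    """Assign class_name to every object whose feature value lies in [low, high]."""
    for obj in objects:
        if low <= obj[feature] <= high:
            obj["class"] = class_name
    return objects


# Hypothetical image objects with one feature value each.
image_objects = [
    {"id": 1, "brightness": 42.0, "class": None},
    {"id": 2, "brightness": 180.0, "class": None},
    {"id": 3, "brightness": 210.5, "class": None},
]

# Condition: brightness range 150 to 255 means 'bright'.
assign_class(image_objects, "brightness", 150.0, 255.0, "bright")
for obj in image_objects:
    print(obj["id"], obj["class"])
```

Objects that do not meet the condition keep their previous state, which mirrors the yes/no nature of a condition-based assignment.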

The Classification algorithm uses class descriptions to classify image objects. It evaluates the class description and determines whether an image object can be a member of a class.

Classes without a class description are assumed to have a membership value of one. You can use this algorithm if you want to apply fuzzy logic to membership functions, or if you have combined conditions in a class description.

Based on the calculated membership value, information about the three best-fitting classes is stored in the image object classification window; therefore, you can see into what other classes this image object would fit and possibly fine-tune your settings. To apply this function:

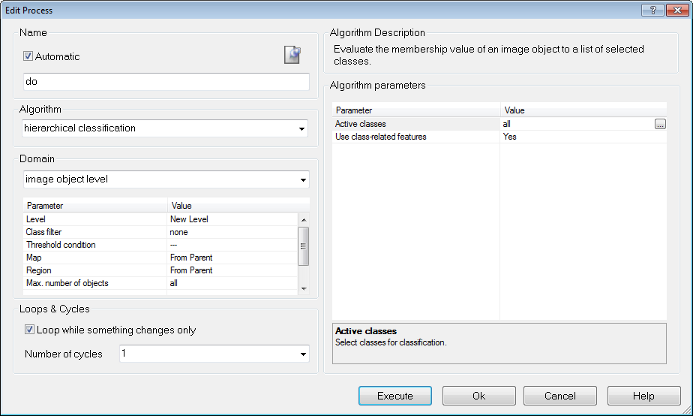

The Hierarchical Classification algorithm is used to apply complex class hierarchies to image object levels. It is backwards compatible with eCognition 4 and older class hierarchies and can open them without major changes.

The algorithm can be applied to an entire set of hierarchically arranged classes. It applies a predefined logic to activate and deactivate classes based on the following rules:

If the membership value of an image object is lower than the pre-defined minimum membership value, the image object remains unclassified. If two or more class descriptions share the highest membership value, the assignment of an object to one of these classes is random.

The three best classes are stored as the image object classification result. Class-related features are considered only if explicitly enabled by the corresponding parameter.

Advanced classification algorithms are designed to perform specific classification tasks. All advanced classification settings allow you to define the same classification settings as the classification algorithm; in addition, algorithm-specific settings must be set. The following algorithms are available:

A threshold condition determines whether an image object matches a condition or not. Typically, you use thresholds in class descriptions if classes can be clearly separated by a feature.

It is possible to assign image objects to a class based on only one condition; however, the advantage of using class descriptions lies in combining several conditions. The concept of threshold conditions is also available for process-based classification; in this case, the threshold condition is part of the domain and can be added to most algorithms. This limits the execution of the respective algorithm to only those objects that fulfill this condition. To use a threshold:

The class description contains class definitions such as name and color, along with several other settings. In addition it can hold expressions that describe the requirements an image object must meet to be a member of this class when class description-based classification is used. There are two types of expressions:

You can use logical operators to combine the expressions and these expressions can be nested to produce complex logical expressions.

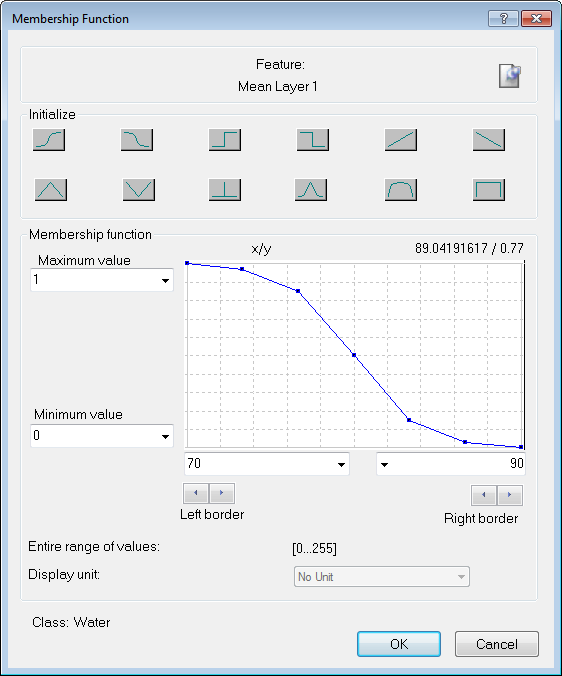

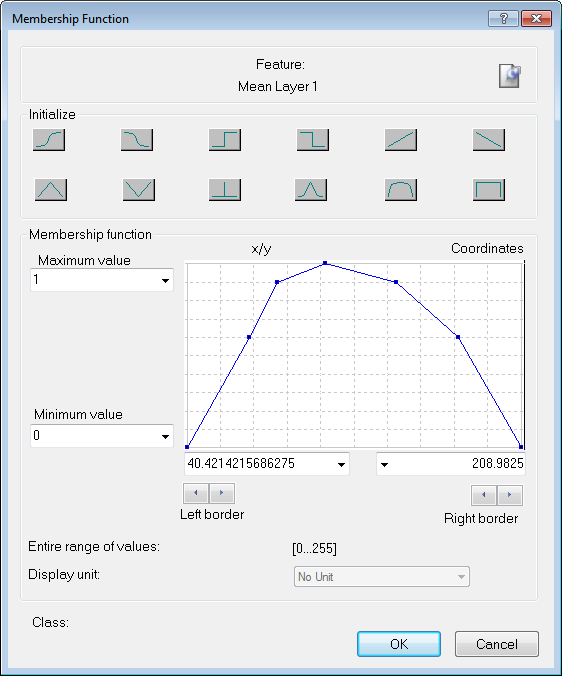

Membership functions allow you to define the relationship between feature values and the degree of membership to a class using fuzzy logic.

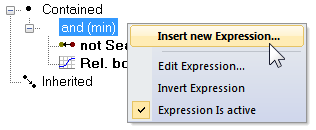

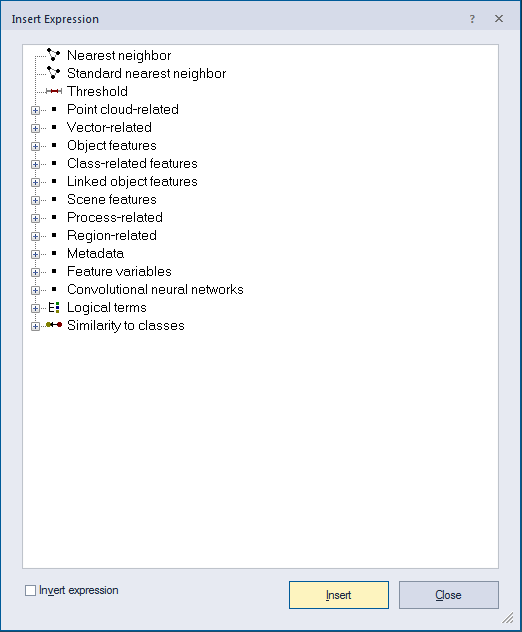

Double-clicking on a class in the Class Hierarchy window launches the Class Description dialog box. To open the Membership Function dialog, right-click on an expression (the default expression in an empty box is 'and (min)'). To insert a new expression, select Insert New Expression; to edit an existing one, right-click it and select Edit Expression.

For assigning membership, the following predefined functions are available:

| Button | Function Form |
|---|---|
| | Larger than |
| | Smaller than |
| | Larger than (Boolean, crisp) |
| | Smaller than (Boolean, crisp) |
| | Larger than (linear) |
| | Smaller than (linear) |
| | Linear range (triangle) |
| | Linear range (triangle inverted) |
| | Singleton (exactly one value) |
| | Approximate Gaussian |
| | About range |
| | Full range |
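To make the function forms more concrete, here is a minimal sketch of four of them as plain Python functions. The parameter names (`left`, `right`, `peak`, `threshold`) and the exact shapes are assumptions based on common fuzzy-logic definitions; the curves eCognition actually uses are defined in the Membership Function dialog and may differ in detail.

```python
def larger_than_linear(x, left, right):
    """Rise linearly from 0 at `left` to 1 at `right`."""
    if x <= left:
        return 0.0
    if x >= right:
        return 1.0
    return (x - left) / (right - left)


def smaller_than_linear(x, left, right):
    """Fall linearly from 1 at `left` to 0 at `right`."""
    return 1.0 - larger_than_linear(x, left, right)


def larger_than_crisp(x, threshold):
    """Boolean (crisp) form: membership is either 0 or 1."""
    return 1.0 if x > threshold else 0.0


def linear_range_triangle(x, left, peak, right):
    """Triangle: 0 outside (left, right), 1 at `peak`, linear in between."""
    if x <= left or x >= right:
        return 0.0
    if x <= peak:
        return (x - left) / (peak - left)
    return (right - x) / (right - peak)


print(larger_than_linear(150, 100, 200))          # 0.5
print(larger_than_crisp(150, 100))                # 1.0
print(linear_range_triangle(175, 100, 150, 200))  # 0.5
```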

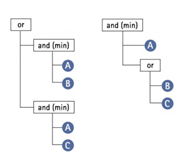

After the manual or automatic definition of membership functions, fuzzy logic can be applied to combine these fuzzified features with operators. Generally, fuzzy rules set certain conditions which result in a membership value to a class. If the condition only depends on one feature, no logic operators would be necessary to model it. However, there are usually multidimensional dependencies in the feature space and you may have to model a logic combination of features to represent this condition. This combination is performed with fuzzy logic. Fuzzy logic allows the modelling of several concepts of 'and' and 'or'.

The most common and simplest combination is the realization of 'and' by the minimum operator and 'or' by the maximum operator. When the maximum operator 'or (max)' is used, the membership of the output equals the maximum fulfilment of the single statements. Its counterpart, the minimum operator 'and (min)', equals the minimum fulfilment of the single statements. This means that out of a number of conditions combined by the maximum operator, the highest membership value is returned. If the minimum operator is used, the condition that produces the lowest value determines the return value. The other operators differ mainly in that the values of all contained conditions contribute to the output, whereas for minimum and maximum only one statement determines the output.

When creating a new class, its conditions are combined with the minimum operator 'and (min)' by default. The default operator can be changed and additional operators can be inserted to build complex class descriptions, if necessary. For given input values the membership degree of the condition and therefore of the output will decrease with the following sequence:

See also Operator for Expression.

To change the default operator, right-click the operator and select 'Edit Expression.'

You can now choose from the available operators. To insert additional operators, open the 'Insert Expression' menu and select an operator under 'Logical Terms.' To insert an inverted operator, activate the 'Invert Expression' box in the same dialog; this negates the operator (returns 1 – fuzzy value): 'not and (min).' To combine classes with the newly inserted operators, click and drag the respective classes onto the operator.

A hierarchy of logical operator expressions can be combined to form well-structured class descriptions. Thereby, class descriptions can be designed very flexibly on the one hand, and very specifically on the other. An operator can combine either expressions only, or expressions and additional operators - again linking expressions.

An example of the flexibility of the operators is given in the image below. Both constellations represent the same conditions to be met in order to classify an object.

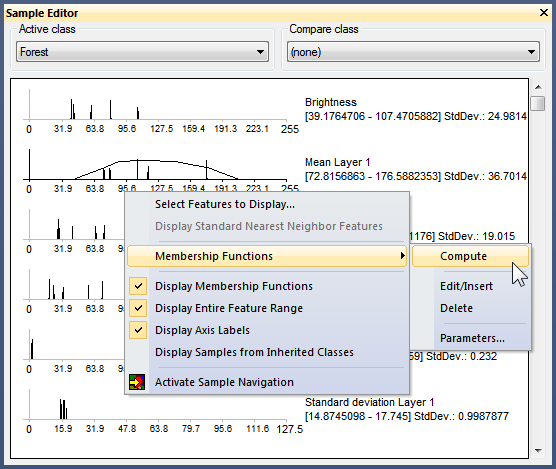

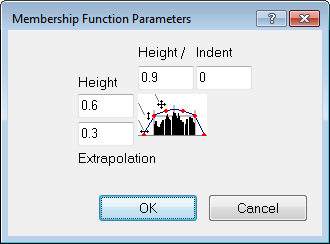

In some cases, especially when classes can be clearly distinguished, it is convenient to automatically generate membership functions. This can be done within the Sample Editor window (for more details on this function, see Working with the Sample Editor).

To generate a membership function, right-click the respective feature in the Sample Editor window and select Membership Functions > Compute.

Membership functions can also be inserted and defined manually in the Sample Editor window. To do this, right-click a feature and select Membership Functions > Edit/Insert, which opens the Membership Function dialog box. This also allows you to edit an automatically generated function.

To delete a generated membership function, select Membership Functions > Delete. You can switch the display of generated membership functions on or off by right-clicking in the Sample Editor window and activating or deactivating Display Membership Functions.

You can edit parameters of a membership function computed from sample objects.

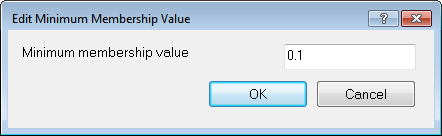

The minimum membership value defines the value an image object must reach to be considered a member of the class.

If the membership value of an image object is lower than a predefined minimum, the image object remains unclassified. If two or more class descriptions share the highest membership value, the assignment of an object to one of these classes is random.

To change the default value of 0.1, open the Edit Minimum Membership Value dialog box by selecting Classification > Advanced Settings > Edit Minimum Membership Value from the main menu.
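The role of the minimum membership value can be sketched as follows. The function `classify` and its input format are hypothetical; the default of 0.1 and the idea of keeping the three best classes come from the text. Note that this sketch resolves ties by input order, whereas the text states that the assignment is random in that case.

```python
def classify(memberships, min_membership=0.1):
    """Return (assigned_class, three_best) for one image object.

    memberships: dict mapping class name -> membership value in [0, 1].
    The object stays unclassified (None) if even the best membership
    value is lower than min_membership.
    """
    if not memberships:
        return None, []
    # Sort by membership value, highest first (ties keep input order).
    ranked = sorted(memberships.items(), key=lambda item: item[1], reverse=True)
    three_best = ranked[:3]
    best_class, best_value = three_best[0]
    assigned = best_class if best_value >= min_membership else None
    return assigned, three_best


print(classify({"water": 0.7, "forest": 0.3, "urban": 0.05, "road": 0.2}))
print(classify({"water": 0.05, "forest": 0.08}))
```

The first call assigns 'water' and keeps 'water', 'forest' and 'road' as the three best classes; the second leaves the object unclassified because no value reaches 0.1.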

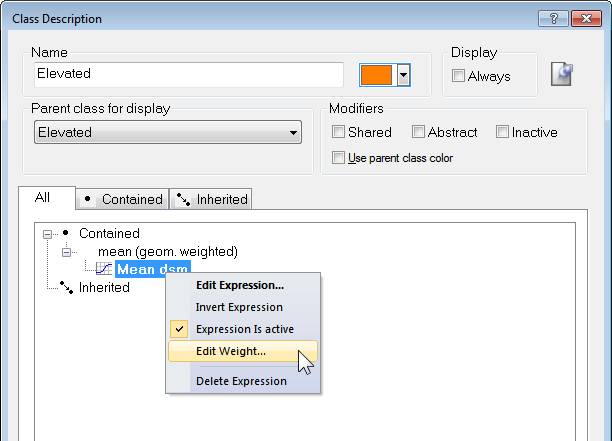

The following expressions support weighting:

Weighting can be added to any expression by right-clicking on it and selecting Edit Weight. The weighting can be a positive number, or a scene or object variable. Information on weighting is also displayed in the Class Evaluation tab in the Image Object Information window.

Weights are integrated into the class evaluation value using the following formulas (where w = weight and m = membership value):

Similarities work like the inheritance of class descriptions. Basically, adding a similarity to a class description is equivalent to inheriting from this class. However, since similarities are part of the class description, they can be used with much more flexibility than an inherited feature. This is particularly obvious when they are combined by logical terms.

A very useful method is the application of inverted similarities as a sort of negative inheritance: consider a class 'bright' that is defined by high layer mean values. You can define a class 'dark' by inserting a similarity feature to 'bright' and inverting it, thus yielding the meaning 'dark is not bright'.

It is important to note that this formulation of 'dark is not bright' refers to similarities and not to classification. An object with a membership value of 0.25 to the class 'bright' would be correctly classified as 'bright'. If in the next cycle a new class 'dark' is added containing an inverted similarity to 'bright', the same object would be classified as 'dark', since the inverted similarity produces a membership value of 0.75. If you want to specify that 'dark' is everything which is not classified as 'bright', you should use the feature Classified As.
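The arithmetic behind this example is short enough to write out. The sketch below assumes that an inverted similarity returns 1 minus the membership value of the referenced class; the helper name is hypothetical.

```python
def inverted_similarity(membership):
    """Inverted similarity: 1 minus the membership to the referenced class."""
    return 1.0 - membership


bright = 0.25                       # membership of the object to 'bright'
dark = inverted_similarity(bright)  # membership of the same object to 'dark'

# With both classes present, the class with the higher membership wins.
memberships = {"bright": bright, "dark": dark}
winner = max(memberships, key=memberships.get)

print(dark)    # 0.75
print(winner)  # dark
```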

Similarities are inserted into the class description like any other expression.

The combination of fuzzy logic and class descriptions is a powerful classification tool. However, it has some major drawbacks:

There are two ways to avoid these problems: stagger several processes containing the required conditions using the Parent Process Object concept (PPO), or use evaluation classes. Evaluation classes are as crucial for efficient development of auto-adaptive rule sets as variables and temporary classes.

To clarify, evaluation classes are not a specific feature and are created in exactly the same way as 'normal' classes. The idea is that evaluation classes will not appear in the classification result – they are better considered as customized features than real classes.

As with temporary classes, we suggest you prefix their names with '_Eval' and label them all with the same color, to distinguish them from other classes.

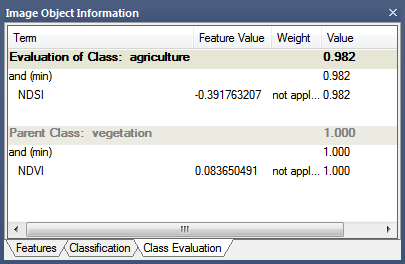

To optimize the thresholds for evaluation classes, click on the Class Evaluation tab in the Image Object Information window. Clicking on an object returns all of its defined values, allowing you to adjust them as necessary.

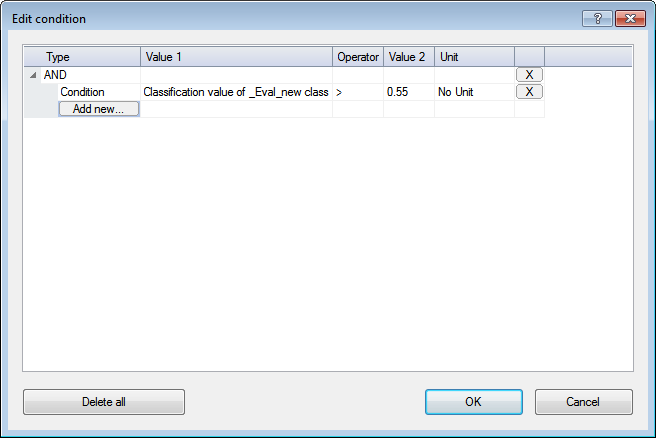

In this example, the rule set developer has specified a threshold of 0.55. Rather than use this value in every rule set item, new processes simply refer to this evaluation class when entering a value for a threshold condition; if developers wish to change this value, they need only change the evaluation class.

TIP: When using this feature with the geometrical mean logical operator, ensure that no classifications return a value of zero, as the multiplication of values will also result in zero. If you want to return values between 0 and 1, use the arithmetic mean operator.
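The effect described in this tip can be checked numerically. The sketch assumes the geometric mean operator is the n-th root of the product of the membership values; the function names are illustrative only.

```python
import math


def geometric_mean(values):
    """n-th root of the product; a single zero forces the result to zero."""
    return math.prod(values) ** (1.0 / len(values))


def arithmetic_mean(values):
    """Sum divided by count; a single zero only lowers the result."""
    return sum(values) / len(values)


values = [0.9, 0.8, 0.0]
print(geometric_mean(values))   # 0.0
print(arithmetic_mean(values))
```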

Classification with membership functions is based on user-defined functions of object features, whereas Nearest Neighbor classification uses a set of samples of different classes to assign membership values. The procedure consists of two major steps:

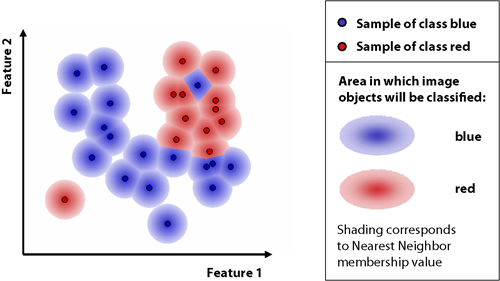

The nearest neighbor classifies image objects in a given feature space and with given samples for the classes of concern. First the software needs samples, typical representatives for each class. After a representative set of sample objects has been declared the algorithm searches for the closest sample object in the defined feature space for each image object. The user can select the features to be considered for the feature space. If an image object's closest sample object belongs to Class A, the object will be assigned to Class A.

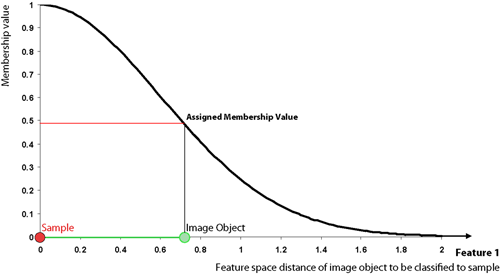

All class assignments in eCognition are determined by assignment values in the range 0 (no assignment) to 1 (full assignment). The closer an image object is located in the feature space to a sample of class A, the higher the membership degree to this class. The membership value is 1 if the image object is identical to a sample. If the image object differs from the sample, the membership value has a fuzzy dependency on the feature space distance to the nearest sample of a class (see also Setting the Function Slope and Details on Calculation).

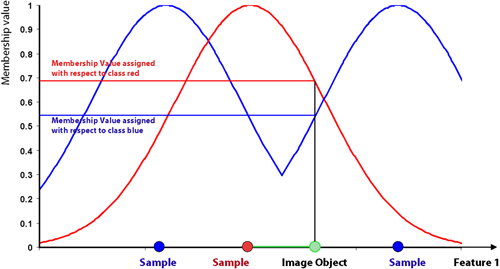

For an image object to be classified, only the nearest sample is used to evaluate its membership value. The effective membership function at each point in the feature space is a combination of fuzzy function over all the samples of that class. When the membership function is described as one-dimensional, this means it is related to one feature.

In higher dimensions, depending on the number of features considered, it is harder to depict the membership functions. However, if you consider two features and two classes only, it might look like the following graph:

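eCognition computes the distance d as follows:

$$d = \sqrt{\sum_{f} \left( \frac{v_f^{(s)} - v_f^{(o)}}{\sigma_f} \right)^2}$$

| Symbol | Meaning |
|---|---|
| $d$ | Distance between sample object s and image object o |
| $v_f^{(s)}$ | Feature value of sample object for feature f |
| $v_f^{(o)}$ | Feature value of image object for feature f |
| $\sigma_f$ | Standard deviation of the feature values for feature f |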

The distance in the feature space between a sample object and the image object to be classified is standardized by the standard deviation of all feature values. Thus, features of varying range can be combined in the feature space for classification. Due to the standardization, a distance value of d = 1 means that the distance equals the standard deviation of all feature values of the features defining the feature space.

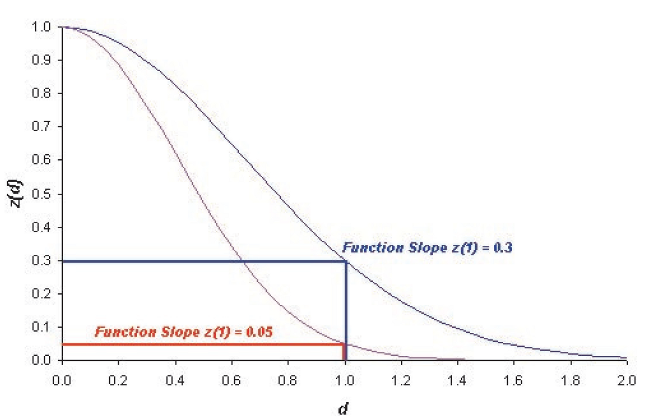

Based on the distance d a multidimensional, exponential membership function z(d) is computed:

$$z(d) = e^{-k d^{2}}$$

The parameter k determines the decrease of z(d). You can define this parameter with the variable function slope:

$$k = \ln\left(\frac{1}{\text{function slope}}\right)$$

The default value for the function slope is 0.2. The smaller the parameter function slope, the narrower the membership function. Image objects have to be closer to sample objects in the feature space to be classified. If the membership value is less than the minimum membership value (default setting 0.1), then the image object is not classified. The following figure demonstrates how the exponential function changes with different function slopes.
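The calculation described in this section can be sketched in Python. Two assumptions are made here and should be checked against the eCognition reference: the distance is taken to be the Euclidean distance with each feature difference divided by that feature's standard deviation, and the membership function is taken to be z(d) = exp(-k d²) with k = ln(1 / function slope), so that z(1) equals the function slope. All function names and the sample data are hypothetical.

```python
import math


def standardized_distance(sample, obj, sigma):
    """Distance between a sample and an image object, scaled per feature."""
    return math.sqrt(
        sum(((s - o) / sd) ** 2 for s, o, sd in zip(sample, obj, sigma))
    )


def membership(d, function_slope=0.2):
    """Assumed exponential membership: 1 at d = 0, function_slope at d = 1."""
    k = math.log(1.0 / function_slope)
    return math.exp(-k * d * d)


def nearest_neighbor_classify(obj, samples, sigma,
                              function_slope=0.2, min_membership=0.1):
    """samples: list of (class_name, feature_vector) pairs.

    Returns (class_name or None, membership value of the nearest sample).
    """
    best_class, best_value = None, 0.0
    for class_name, vector in samples:
        z = membership(standardized_distance(vector, obj, sigma), function_slope)
        if z > best_value:
            best_class, best_value = class_name, z
    if best_value < min_membership:
        return None, best_value  # too far from every sample
    return best_class, best_value


samples = [("water", [10.0, 0.2]), ("forest", [60.0, 0.8])]
sigma = [20.0, 0.3]  # standard deviation of each feature

print(nearest_neighbor_classify([12.0, 0.25], samples, sigma))
print(nearest_neighbor_classify([200.0, 5.0], samples, sigma))
```

The first object lies close to the 'water' sample and is assigned to that class; the second is far from both samples, so its membership value falls below the minimum and it stays unclassified.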

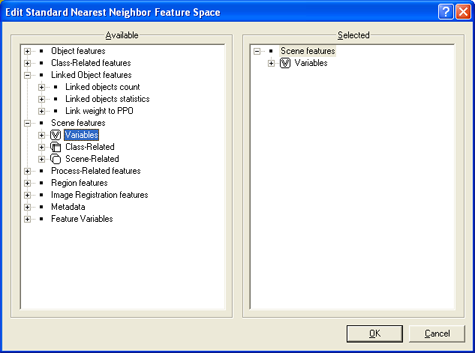

To define feature spaces, Nearest Neighbor (NN) expressions are used and later applied to classes. eCognition Developer distinguishes between two types of nearest neighbor expressions:

The Standard Nearest Neighbor feature space is now defined for the entire project. If you change the feature space in one class description, all classes that contain the Standard Nearest Neighbor expression are affected.

The feature space for both the Nearest Neighbor and the Standard Nearest Neighbor classifier can be edited by double-clicking them in the Class Description dialog box.

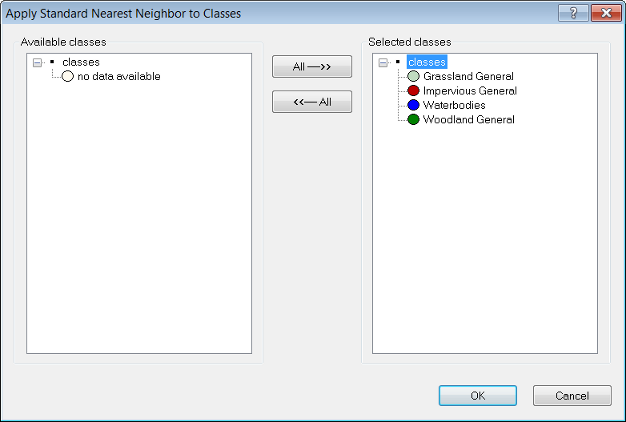

Once the Nearest Neighbor classifier has been assigned to all classes, the next step is to collect samples representative of each one.

Successful Nearest Neighbor classification usually requires several rounds of sample selection and classification. It is most effective to classify a small number of samples and then select samples that have been wrongly classified. Within the feature space, misclassified image objects are usually located near the borders of the general area of this class. Those image objects are the most valuable in accurately describing the feature space region covered by the class. To summarize:

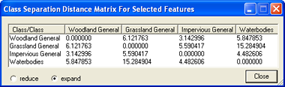

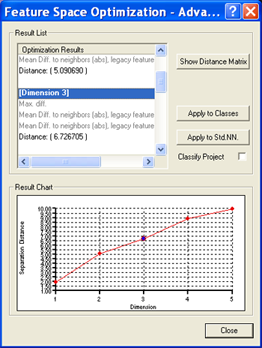

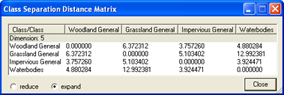

Feature Space Optimization is an instrument to help you find the combination of features most suitable for separating classes, in conjunction with a nearest neighbor classifier.

It compares the features of selected classes to find the combination of features that produces the largest average minimum distance between the samples of the different classes.
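The search can be pictured as a brute-force comparison of feature combinations. The sketch below is one possible reading of "largest average minimum distance", not eCognition's implementation: for every pair of classes it takes the minimum distance between their samples, averages these minima, and reports the best combination for each dimension. Feature values are assumed to be standardized already; all names and data are hypothetical.

```python
import math
from itertools import combinations


def class_separation(samples, features):
    """Average, over all class pairs, of the minimum sample-to-sample distance."""
    pair_minima = []
    for (_, rows_a), (_, rows_b) in combinations(samples.items(), 2):
        pair_minima.append(min(
            math.dist([a[f] for f in features], [b[f] for f in features])
            for a in rows_a for b in rows_b
        ))
    return sum(pair_minima) / len(pair_minima)


def best_combinations(samples, candidate_features, max_dimension):
    """Return {dimension: (best feature combination, separation score)}."""
    result = {}
    for n in range(1, max_dimension + 1):
        scored = [(combo, class_separation(samples, combo))
                  for combo in combinations(candidate_features, n)]
        result[n] = max(scored, key=lambda item: item[1])
    return result


# Hypothetical samples: class name -> list of feature dictionaries.
samples = {
    "water": [{"ndvi": 0.10, "brightness": 0.50},
              {"ndvi": 0.15, "brightness": 0.90}],
    "forest": [{"ndvi": 0.80, "brightness": 0.55},
               {"ndvi": 0.90, "brightness": 0.85}],
}

for dimension, (combo, score) in best_combinations(
        samples, ["ndvi", "brightness"], 2).items():
    print(dimension, combo, round(score, 3))
```

In this toy data set, 'ndvi' separates the two classes far better than 'brightness', so it is selected as the best one-dimensional feature space.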

The Feature Space Optimization dialog box helps you optimize the feature space of a nearest neighbor expression.

To open the Feature Space Optimization dialog box, choose Tools > Feature Space Optimization or Classification > Nearest Neighbor > Feature Space Optimization from the main menu.

TIP: When you change any setting of features or classes, you must first click Calculate before the matrix reflects these changes.

The Feature Space Optimization – Advanced Information dialog box provides further information about all feature combinations and the separability of the class samples.

You can automatically apply the results of your Feature Space Optimization efforts to the project.

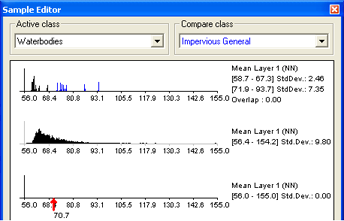

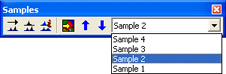

The Sample Editor window is the principal tool for inputting samples. For a selected class, it shows histograms of selected features of samples in the currently active map. The same values can be displayed for all image objects at a certain level or all levels in the image object hierarchy.

You can use the Sample Editor window to compare the attributes or histograms of image objects and samples of different classes. It is helpful to get an overview of the feature distribution of image objects or samples of specific classes. The features of an image object can be compared to the total distribution of this feature over one or all image object levels.

Use this tool to assign samples using a Nearest Neighbor classification or to compare an image object to already existing samples, in order to determine to which class an image object belongs. If you assign samples, features can also be compared to the samples of other classes. Only samples of the currently active map are displayed.

To compare samples or layer histograms of two classes, select the classes or the levels you want to compare in the Active Class and Compare Class lists.

Values of the active class are displayed in black in the diagram, the values of the compared class in blue. The value range and standard deviation of the samples are displayed on the right-hand side.

When you select an image object, the feature value is highlighted with a red pointer. This enables you to compare different objects with regard to their feature values. The following functions help you to work with the Sample Editor:

In addition, the Sample Editor window allows you to generate membership functions. The following options are available:

A Nearest Neighbor classification needs training areas. Therefore, representative samples of image objects need to be collected.

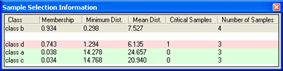

Once a class has at least one sample, the quality of a new sample can be assessed in the Sample Selection Information window. It can help you to decide if an image object contains new information for a class, or if it should belong to another class.

The critical sample membership value can be changed by right-clicking inside the window. Select Modify Critical Sample Membership Overlap from the context menu. The default value is 0.7, which means all membership values higher than 0.7 are critical.

To select which classes are shown, right-click inside the dialog box and choose Select Classes to Display.

To navigate to samples in the map view, select samples in the Sample Editor window to highlight them in the map view.

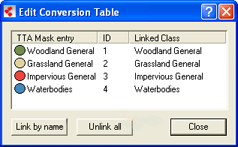

Existing samples can be stored in a file called a training and test area (TTA) mask, which allows you to transfer them to other scenes.

To allow mapping samples to image objects, you can define the degree of overlap that a sample image object must show to be considered within the training area. The TTA mask also contains information about classes for the map. You can use these classes or add them to your existing class hierarchy.

To load samples from an existing Training and Test Area (TTA) mask:

You can check and edit the linkage between classes of the map and the classes of a Training and Test Area (TTA) mask.

You must edit the conversion table only if you chose to keep your existing class hierarchy and used different names for the classes. A TTA mask has to be loaded and the map must contain classes.

You can use shapefiles to create sample image objects. A shapefile, also called an ESRI shapefile, is a standardized vector file format used to visualize geographic data. You can obtain shapefiles from other geo applications or by exporting them from eCognition maps. A shapefile consists of several individual files such as .shx, .shp and .dbf.

To provide an overview, using a shapefile for sample creation comprises the following steps:

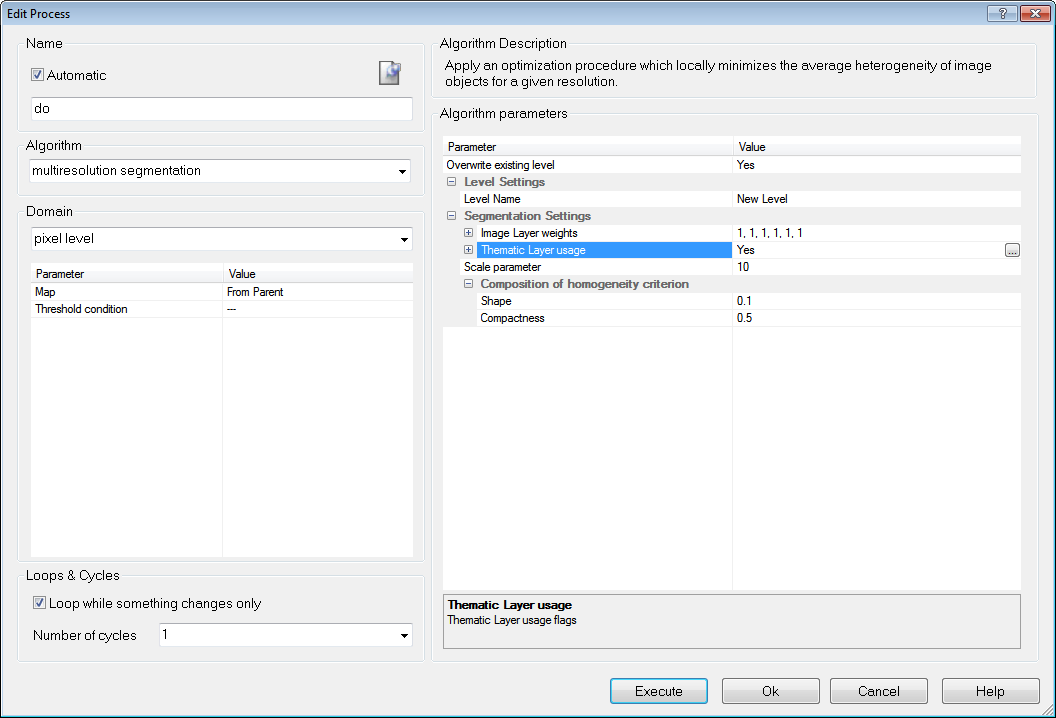

The segmentation finds all objects of the shapefile and converts them to image objects in the thematic layer.

The child process identifies image objects using information from the thematic layer. Use the threshold classifier and a feature created from the thematic layer attribute table, for example ‘Image Object ID’ or ‘Class’ from a shapefile ‘Thematic Layer 1’.

The Sample Brush is an interactive tool that allows you to use your cursor like a brush, creating samples as you sweep it across the map view. Go to the Sample Editor toolbar (View > Toolbars > Sample Editor) and press the Select Sample button. Right-click on the image in map view and select Sample Brush.

Drag the cursor across the scene to select samples. By default, samples are not reselected if the image objects are already classified, but existing samples are replaced if you drag over them again. These settings can be changed in the Sample Brush group of the Options dialog box. To deselect samples, press Shift as you drag.

The Sample Brush will select up to one hundred image objects at a time, so you may need to increase magnification if you have a large number of image objects.

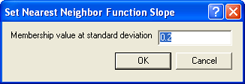

The Nearest Neighbor Function Slope defines the distance an object may have from the nearest sample in the feature space while still being classified. Enter values between 0 and 1. Higher values result in a larger number of classified objects.

To prevent non-deterministic classification results when using class-related features in a nearest neighbor feature space, several constraints must be observed:

The classifier algorithm allows classifying based on different statistical classification algorithms:

The Classifier algorithm can be applied either pixel- or object-based. For an example project containing these classifiers, see http://community.ecognition.com/home/CART%20-%20SVM%20Classifier%20Example.zip/view

A Bayes classifier is a simple probabilistic classifier based on applying Bayes’ theorem (from Bayesian statistics) with strong independence assumptions. In simple terms, a Bayes classifier assumes that the presence (or absence) of a particular feature of a class is unrelated to the presence (or absence) of any other feature. For example, a fruit may be considered to be an apple if it is red, round, and about 4” in diameter. Even if these features depend on each other or upon the existence of the other features, a Bayes classifier considers all of these properties to independently contribute to the probability that this fruit is an apple. An advantage of the naive Bayes classifier is that it only requires a small amount of training data to estimate the parameters (means and variances of the variables) necessary for classification. Because independent variables are assumed, only the variances of the variables for each class need to be determined and not the entire covariance matrix.
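The independence assumption can be illustrated with a small Gaussian naive Bayes sketch in plain Python. It is not the implementation used by eCognition; the function names, the variance floor of 1e-9 and the fruit measurements are assumptions made for this example. Only a mean and a variance per feature and class are estimated, never a covariance matrix.

```python
import math
from collections import defaultdict


def train(samples):
    """samples: list of (label, feature_vector).

    Returns {label: (prior, means, variances)}.
    """
    by_class = defaultdict(list)
    for label, vector in samples:
        by_class[label].append(vector)
    model = {}
    for label, rows in by_class.items():
        n = len(rows)
        columns = list(zip(*rows))
        means = [sum(col) / n for col in columns]
        # Small floor keeps the variance strictly positive.
        variances = [max(sum((x - m) ** 2 for x in col) / n, 1e-9)
                     for col, m in zip(columns, means)]
        model[label] = (n / len(samples), means, variances)
    return model


def predict(model, vector):
    """Pick the class with the highest log posterior."""
    def log_posterior(prior, means, variances):
        log_p = math.log(prior)
        # Independence assumption: per-feature log likelihoods simply add up.
        for x, m, v in zip(vector, means, variances):
            log_p += -0.5 * math.log(2 * math.pi * v) - (x - m) ** 2 / (2 * v)
        return log_p
    return max(model, key=lambda label: log_posterior(*model[label]))


# Hypothetical training data: [diameter in cm, weight in g].
training = [
    ("apple", [7.0, 150.0]), ("apple", [7.5, 160.0]), ("apple", [6.8, 145.0]),
    ("orange", [8.5, 200.0]), ("orange", [9.0, 210.0]), ("orange", [8.8, 190.0]),
]
model = train(training)
print(predict(model, [7.2, 155.0]))  # apple
print(predict(model, [8.9, 205.0]))  # orange
```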

The k-nearest neighbor algorithm (k-NN) is a method for classifying objects based on closest training examples in the feature space. k-NN is a type of instance-based learning, or lazy learning where the function is only approximated locally and all computation is deferred until classification. The k-nearest neighbor algorithm is amongst the simplest of all machine learning algorithms: an object is classified by a majority vote of its neighbors, with the object being assigned to the class most common amongst its k nearest neighbors (k is a positive integer, typically small). The 5-nearest-neighbor classification rule is to assign to a test sample the majority class label of its 5 nearest training samples. If k = 1, then the object is simply assigned to the class of its nearest neighbor.

This means k is the number of samples to be considered in the neighborhood of an unclassified object/pixel. The best choice of k depends on the data: larger values reduce the effect of noise in the classification, but the class boundaries are less distinct.
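The majority vote and the influence of k can be shown with a short sketch. The function `knn_classify` and the sample data are hypothetical; plain Euclidean distance is assumed.

```python
import math
from collections import Counter


def knn_classify(samples, vector, k=5):
    """samples: list of (label, feature_vector). Majority vote of the k nearest."""
    neighbors = sorted(samples, key=lambda s: math.dist(s[1], vector))[:k]
    votes = Counter(label for label, _ in neighbors)
    return votes.most_common(1)[0][0]


samples = [
    ("A", [0.0, 0.0]), ("A", [0.0, 1.0]), ("A", [1.0, 0.0]),
    ("B", [2.0, 2.0]), ("B", [2.1, 2.2]),
]
point = [1.6, 1.6]

print(knn_classify(samples, point, k=1))  # B
print(knn_classify(samples, point, k=3))  # B
print(knn_classify(samples, point, k=5))  # A
```

With k = 1 or k = 3 the two nearby 'B' samples decide the result; with k = 5 every sample votes and the more numerous class 'A' wins, which illustrates how larger values of k make class boundaries less distinct.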

eCognition implements Nearest Neighbor as a classifier that can be applied either with the classifier algorithm (KNN with k=1) or through the Nearest Neighbor Classification concept described earlier.

A support vector machine (SVM) is a concept in computer science for a set of related supervised learning methods that analyze data and recognize patterns, used for classification and regression analysis. The standard SVM takes a set of input data and predicts, for each given input, which of two possible classes the input is a member of. Given a set of training examples, each marked as belonging to one of two categories, an SVM training algorithm builds a model that assigns new examples into one category or the other. An SVM model is a representation of the examples as points in space, mapped so that the examples of the separate categories are pided by a clear gap that is as wide as possible. New examples are then mapped into that same space and predicted to belong to a category based on which side of the gap they fall on. Support Vector Machines are based on the concept of decision planes defining decision boundaries. A decision plane separates between a set of objects having different class memberships.

There are different kernels that can be used in Support Vector Machine models. eCognition includes the linear and the radial basis function (RBF) kernels. The RBF is the most popular choice of kernel type used in Support Vector Machines. Training of the SVM classifier involves the minimization of an error function with C as the capacity constant.
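The following Python sketch illustrates these ideas under stated assumptions. It assumes the common soft-margin formulation of the error function, 0.5 * ||w||^2 + C * (sum of hinge losses), and the usual RBF definition exp(-gamma * ||x - z||^2). The function names, the `gamma` parameter, the weights and the samples are hypothetical and do not describe eCognition's internals.

```python
import math


def rbf_kernel(x, z, gamma=1.0):
    """Radial basis function kernel: exp(-gamma * ||x - z||^2)."""
    squared_distance = sum((a - b) ** 2 for a, b in zip(x, z))
    return math.exp(-gamma * squared_distance)


def linear_decision(w, b, x):
    """Signed score of x relative to the decision plane w.x + b = 0."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def soft_margin_error(w, b, samples, C=1.0):
    """Assumed error function: 0.5 * ||w||^2 + C * sum of hinge losses.

    `samples` is a list of (feature_vector, label) pairs with label in {-1, +1}.
    """
    regulariser = 0.5 * sum(wi * wi for wi in w)
    hinge = sum(max(0.0, 1.0 - y * linear_decision(w, b, x)) for x, y in samples)
    return regulariser + C * hinge


# Hypothetical samples; the last one lies on the wrong side of the plane.
samples = [
    ((2.0, 2.0), +1),
    ((3.0, 3.0), +1),
    ((0.0, 0.0), -1),
    ((1.0, 0.0), -1),
    ((1.5, 1.0), +1),
]
w, b = (1.0, 1.0), -3.0  # a hand-picked decision plane, not a trained one

# A sample is assigned to a class by the side of the plane it falls on.
predicted = [1 if linear_decision(w, b, x) >= 0 else -1 for x, _ in samples]
error_small_c = soft_margin_error(w, b, samples, C=1.0)
error_large_c = soft_margin_error(w, b, samples, C=10.0)
```

In this formulation, a larger C penalizes the margin-violating sample more heavily, so training would favor a plane that fits the training data more tightly at the cost of a narrower gap.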

Decision tree learning is a method commonly used in data mining where a series of decisions are made to segment the data into homogeneous subgroups. The model looks like a tree with branches, although the tree can be complex, involving a large number of splits and nodes. The goal is to create a model that predicts the value of a target variable based on several input variables. A tree can be “learned” by splitting the source set into subsets based on an attribute value test. This process is repeated on each derived subset in a recursive manner called recursive partitioning. The recursion is completed when all samples in the subset at a node have the same value of the target variable, or when splitting no longer adds value to the predictions. The purpose of the analyses via tree-building algorithms is to determine a set of if-then logical (split) conditions.

The minimum number of samples needed per node is defined by the parameter Min sample count. Finding the right-sized tree may require some experience. A tree with too few splits misses out on improved predictive accuracy, while a tree with too many splits is unnecessarily complicated. Cross-validation helps to address this issue and is controlled by eCognition’s parameter Cross validation folds. For a cross-validation, the classification tree is computed from the learning sample, and its predictive accuracy is tested on test samples. If the costs for the test sample exceed the costs for the learning sample, this indicates poor cross-validation and that a differently sized tree might cross-validate better.
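Recursive partitioning with a minimum sample count can be sketched as follows. This is an illustrative toy, not the algorithm eCognition uses: the Gini impurity is assumed as the split criterion, `min_sample_count` is a hypothetical stand-in for the Min sample count parameter, and the features and class names are invented. Class labels are assumed to be strings so that leaves can be told apart from split nodes.

```python
from collections import Counter


def gini(labels):
    """Gini impurity of a list of class labels (0 means perfectly homogeneous)."""
    total = len(labels)
    return 1.0 - sum((n / total) ** 2 for n in Counter(labels).values())


def build_tree(rows, min_sample_count=2):
    """Recursively partition `rows`, a list of (feature_vector, label) pairs."""
    labels = [label for _, label in rows]
    majority = Counter(labels).most_common(1)[0][0]
    # Stop when the node is pure or too small to be split further.
    if len(set(labels)) == 1 or len(rows) < min_sample_count:
        return majority
    best = None
    for feature in range(len(rows[0][0])):
        for threshold in sorted({x[feature] for x, _ in rows}):
            left = [r for r in rows if r[0][feature] <= threshold]
            right = [r for r in rows if r[0][feature] > threshold]
            if not left or not right:
                continue
            # Weighted impurity of the two children; lower is better.
            score = (len(left) * gini([lab for _, lab in left])
                     + len(right) * gini([lab for _, lab in right])) / len(rows)
            if best is None or score < best[0]:
                best = (score, feature, threshold, left, right)
    # Stop when no split improves on the impurity of the node itself.
    if best is None or best[0] >= gini(labels):
        return majority
    _, feature, threshold, left, right = best
    return (feature, threshold,
            build_tree(left, min_sample_count),
            build_tree(right, min_sample_count))


def predict(tree, x):
    """Follow the if-then split conditions down to a leaf label."""
    while isinstance(tree, tuple):
        feature, threshold, left, right = tree
        tree = left if x[feature] <= threshold else right
    return tree


# Hypothetical samples with two features each.
rows = [
    ((0.10, 0.05), "water"), ((0.15, 0.10), "water"),
    ((0.40, 0.70), "forest"), ((0.45, 0.80), "forest"),
    ((0.80, 0.20), "urban"), ((0.85, 0.25), "urban"),
]
tree = build_tree(rows, min_sample_count=2)
stump = build_tree(rows, min_sample_count=10)  # too few samples: a single leaf
```

Raising `min_sample_count` above the number of available samples prevents any split, so the result collapses to a single majority-class leaf. This is the "too few splits" end of the trade-off described above.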

The random trees classifier is more a framework than a specific model. It takes an input feature vector and classifies it with every tree in the forest. In each tree, the result is the class label of the training samples in the terminal node where the vector ends up. Across all trees, the label that obtained the majority of "votes" is assigned, which gives the random forest prediction. All trees are trained with the same features but on different training sets, which are generated from the original training set. This is done based on the bootstrap procedure: for each training set the same number of vectors as in the original set ( =N ) is selected. The vectors are chosen with replacement, which means some vectors will appear more than once and some will be absent. At each node, not all variables are used to find the best split, but a randomly selected subset of them. For each node a new subset is constructed, where its size is fixed for all the nodes and all the trees. It is a training parameter, set to . None of the trees that are built are pruned.

In random trees the error is estimated internally during the training. When the training set for the current tree is drawn by sampling with replacement, some vectors are left out. This data is called out-of-bag data - in short "oob" data. The oob data size is about N/3. The classification error is estimated based on this oob-data.
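The bootstrap procedure and the resulting out-of-bag set can be simulated with a short Python sketch. The function name `bootstrap_split`, the sample count and the fixed seed are assumptions made for illustration only. For large N, the expected out-of-bag share approaches 1/e (about 0.37), which matches the "about N/3" rule of thumb.

```python
import random


def bootstrap_split(n_samples, rng):
    """Draw n_samples indices with replacement; return (in-bag, out-of-bag)."""
    in_bag = [rng.randrange(n_samples) for _ in range(n_samples)]
    drawn = set(in_bag)
    # Vectors that were never drawn form the out-of-bag data for this tree.
    out_of_bag = [i for i in range(n_samples) if i not in drawn]
    return in_bag, out_of_bag


rng = random.Random(0)  # fixed seed so the sketch is repeatable
n_samples = 10_000
in_bag, out_of_bag = bootstrap_split(n_samples, rng)
oob_fraction = len(out_of_bag) / n_samples
print(f"out-of-bag share: {oob_fraction:.3f}")
```

Because each tree never sees its own out-of-bag vectors during training, classifying them with that tree gives an error estimate without a separate test set.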

The classifier algorithm allows a classification based on sample statistics.

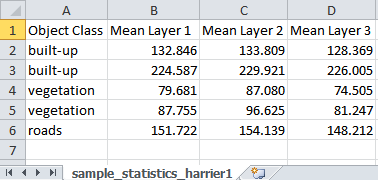

As described in the Reference Book > Advanced Classification Algorithms > Update classifier sample statistics and Export classifier sample statistics, you can apply statistics generated with eCognition’s algorithms to classify your imagery.

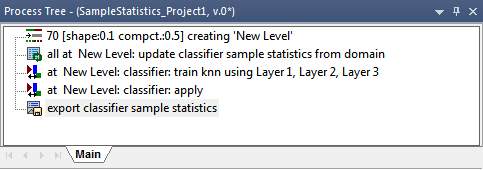

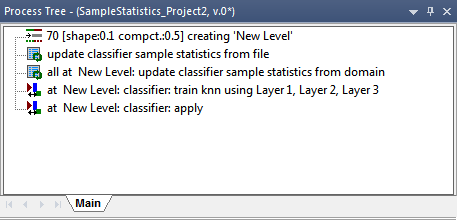

A typical workflow comprises the following steps:

Classes from the sample statistics file are loaded to your project together with the sample statistics information.

You can now add more samples using the manual editing toolbar (same workflow as described in Input of Image Objects for Classifier Sample Statistics). These samples can be added in the following step to the loaded samples of the statistics file.

Insert the process update classifier sample statistics with the settings:

Domain > image object level

Parameter > Level: choose the appropriate level

Execute this process and have a look again at the features:

Note: To reset samples you can execute the process update classifier sample statistics in mode:

Now the image is classified and the described steps can be repeated based on another scene to refine the sample statistics iteratively.

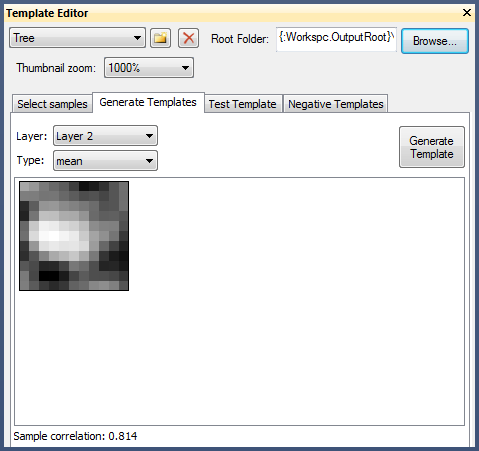

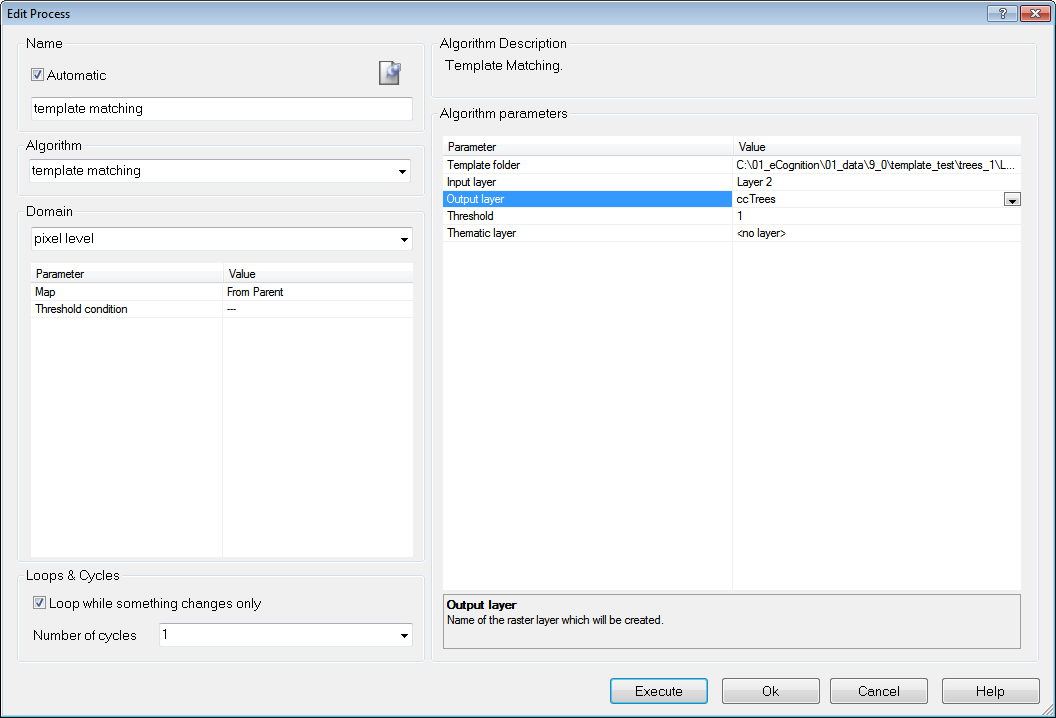

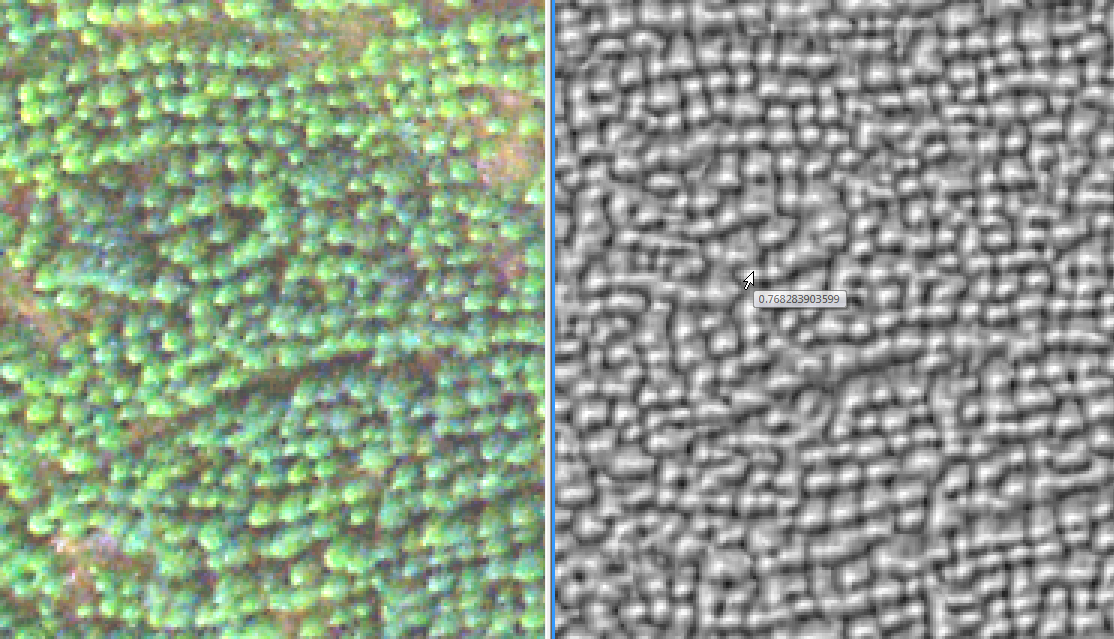

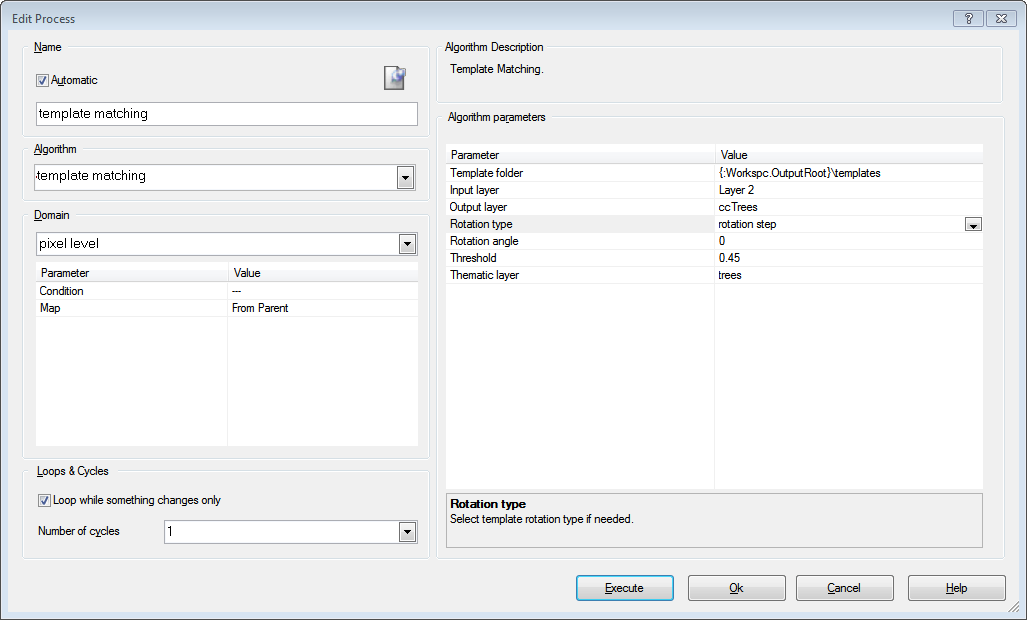

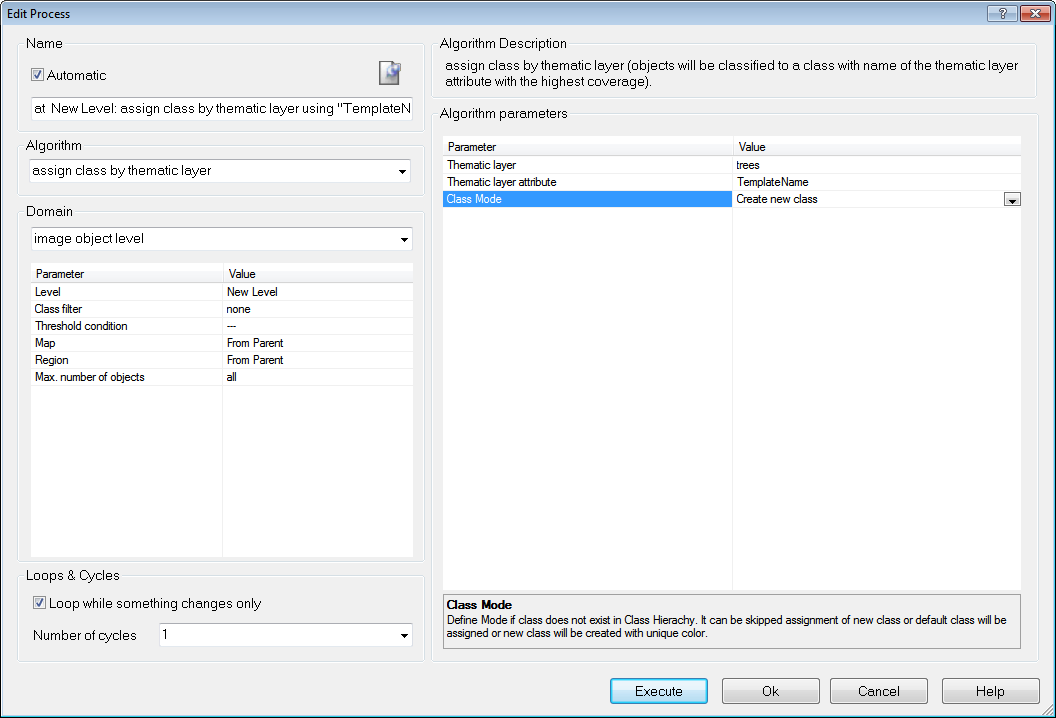

As described in the Reference Book > Template Matching, you can apply templates generated with eCognition’s Template Matching Editor to your imagery.

Please refer to our template matching videos in the eCognition community http://www.ecognition.com/community covering a variety of application examples and workflows.

The typical workflow comprises two steps: template generation using the template editor, and template application using the template matching algorithm.

To generate templates:

To apply your templates:

With convolutional neural networks complex problems can be solved and objects in images recognized. This chapter briefly outlines the recommended approach for using convolutional neural networks in eCognition, which is based on deep learning technology from the Google TensorFlow™ library. Please see also Reference Book > Convolutional Neural Network Algorithms and refer to the corresponding Convolutional Neural Networks Tutorial in the eCognition User Community for more detailed explanations.

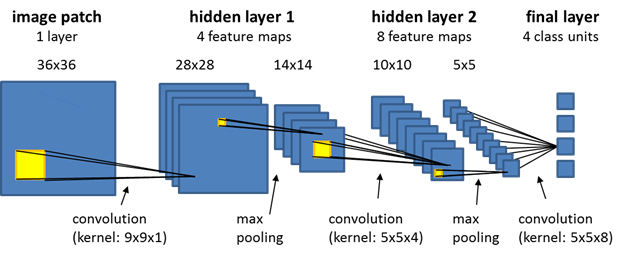

The term convolutional neural networks refers to a class of neural networks with a specific network architecture (see figure below), where each so-called hidden layer typically has two distinct stages. The first stage is the result of a local convolution of the previous layer (the kernel has trainable weights). The second stage is a max-pooling stage, where the number of units is significantly reduced by keeping only the maximum response of several units of the first stage. After several hidden layers, the final layer is normally a fully connected layer. It has a unit for each class that the network predicts, and each of those units receives input from all units of the previous layer.
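The two stages of a hidden layer can be illustrated with a small, dependency-free Python sketch. It is a toy under stated assumptions: the kernel is fixed by hand (in a real network its weights are learned), there is no padding, and pooling uses non-overlapping 2 x 2 blocks. The function names and the image values are hypothetical.

```python
def convolve2d(image, kernel):
    """'Valid' 2D convolution as used in CNNs (cross-correlation, no padding)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def max_pool(feature_map, size=2):
    """Keep only the maximum response in each non-overlapping size x size block."""
    return [
        [
            max(feature_map[r + i][c + j]
                for i in range(size) for j in range(size))
            for c in range(0, len(feature_map[0]) - size + 1, size)
        ]
        for r in range(0, len(feature_map) - size + 1, size)
    ]


# A 5 x 5 image with a bright vertical stripe.
image = [
    [0, 0, 1, 1, 0],
    [0, 0, 1, 1, 0],
    [0, 0, 1, 1, 0],
    [0, 0, 1, 1, 0],
    [0, 0, 1, 1, 0],
]
# Hypothetical fixed kernel that responds to dark-to-bright vertical edges.
kernel = [[-1, 1], [-1, 1]]

feature_map = convolve2d(image, kernel)  # first stage: 4 x 4 responses
pooled = max_pool(feature_map, size=2)   # second stage: 2 x 2 after pooling
```

Here the convolution produces its strongest response at the left edge of the stripe, and max-pooling keeps that response while reducing the 4 x 4 feature map to 2 x 2 units.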

The workflow for using convolutional neural networks is consistent with other supervised machine learning approaches. First, you need to generate a model and train it using training data. Subsequently, you validate your model on new image data. Finally - when the results of the validation are satisfactory - the model can be used in production mode and applied to new data, for which a ground truth is not available.

We suggest the following steps:

Step 1: Classify your training images based on your ground truth, using standard rule set development strategies. Each classified pixel can potentially serve as a distinct sample. Note that for successful training it is important that you have many samples, and that they reflect the statistics of the underlying population for this class. If your objects of interest are very small, you can classify a region around each object location to obtain more samples. We strongly recommend taking great care at this step. The best network architecture cannot compensate for inadequate sampling.

Step 2: Use the algorithm 'generate labeled sample patches' to generate samples for two or more distinct classes, which you want the network to learn. Note that smaller patches will be processed more quickly by the model, but that patches need to be sufficiently large to make a correct classification feasible, i.e., features critical for identification need to be present.

After you have collected all samples, use the algorithm 'shuffle labeled sample patches' to create a random sample order for training, so that samples are not read in the order in which samples were collected.

Step 3: Define the desired network architecture using the algorithm 'create convolutional neural network'. Start with a simple network and increase complexity (number of hidden layers and feature maps) only if your model is not successful, but be aware that with increasing model complexity it is harder for the training algorithm to find a global optimum and bigger networks do not always give better results.

In principle, the model can already be used immediately after it was created, but as its weights are set to random values, it will not be useful in practice before it has been trained.

Step 4: Use the algorithm 'train convolutional neural network' to feed your samples into the model, and to adjust model weights using backpropagation and stochastic gradient descent. Perhaps the most interesting parameter to adjust in this algorithm is the learning rate. It determines by how much weights are adjusted at each training step, and it can play a critical role in whether or not your model learns successfully. We suggest re-shuffling samples from time to time during training. We also recommend occasionally monitoring the current classification quality of your trained model, using the algorithm 'convolutional neural network accuracy'.

Step 5: Save the network using the algorithm 'save convolutional neural network' before you close your project.
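The role of the learning rate mentioned in Step 4 can be illustrated with plain gradient descent on a one-parameter toy loss. This sketch is not a neural network and does not use backpropagation. The loss (w - 3)^2, the function name and the three learning rates are assumptions chosen only to show the effect of the step size.

```python
def gradient_descent(learning_rate, steps=50, start=0.0):
    """Minimise the toy loss (w - 3)^2 and return the weight after each step."""
    w = start
    history = [w]
    for _ in range(steps):
        gradient = 2.0 * (w - 3.0)     # derivative of (w - 3)^2
        w -= learning_rate * gradient  # the learning rate scales every update
        history.append(w)
    return history


converged = gradient_descent(learning_rate=0.1)[-1]  # ends close to the optimum 3
slow = gradient_descent(learning_rate=0.001)[-1]     # barely moves in 50 steps
diverged = gradient_descent(learning_rate=1.1)[-1]   # overshoots further each step
```

A rate that is too small makes little progress within the available training steps, while a rate that is too large overshoots the optimum and moves away from it, which is why this parameter can decide whether a model learns at all.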

Here we suggest the following steps:

Step 1: Load validation data, which has not been used for training your network. A ground truth needs to be available so that you can evaluate model performance.

Step 2: Load your trained convolutional neural network, using the algorithm 'load convolutional neural network'.

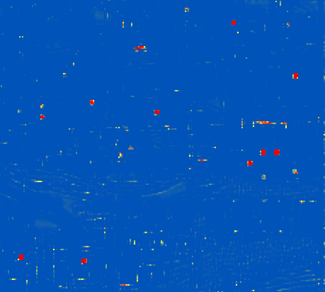

Step 3: Generate heat map layers for your classes of interest by using the algorithm 'apply convolutional neural networks'. Values close to one indicate a high target likelihood, values close to zero indicate a low likelihood.

Step 4: Use the heat map to classify your image, or to detect objects of interest, relying on standard ruleset development strategies.

Step 5: Compare your results to the ground truth, to obtain a measure of accuracy, and thus a quantitative estimate of the performance of your trained convolutional neural network.
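As a rough sketch of Steps 4 and 5, a heat map can be thresholded and compared pixel by pixel to the ground truth. The threshold of 0.5, the function names and the values below are hypothetical. In practice the classification would be done with rule set strategies rather than a single threshold, and other accuracy measures may suit your application better.

```python
def classify_heat_map(heat_map, threshold=0.5):
    """Label each pixel as target (1) where the heat map reaches the threshold."""
    return [[1 if value >= threshold else 0 for value in row] for row in heat_map]


def accuracy(predicted, ground_truth):
    """Fraction of pixels whose predicted label matches the ground truth."""
    pairs = [(p, t)
             for p_row, t_row in zip(predicted, ground_truth)
             for p, t in zip(p_row, t_row)]
    return sum(p == t for p, t in pairs) / len(pairs)


# Hypothetical heat map (values near one indicate a high target likelihood).
heat_map = [
    [0.05, 0.10, 0.92],
    [0.20, 0.85, 0.97],
    [0.60, 0.15, 0.08],
]
ground_truth = [
    [0, 0, 1],
    [0, 1, 1],
    [0, 0, 0],
]

predicted = classify_heat_map(heat_map)
score = accuracy(predicted, ground_truth)  # 8 of 9 pixels agree
```

The single disagreement is the pixel with a heat map value of 0.60, which the threshold labels as target although the ground truth marks it as background.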

Here we suggest the following steps:

Step 1: Load image data that you want to process (a ground truth is not needed anymore at that stage, of course).

Step 2: Load your convolutional neural network, apply it to generate heat maps for classes of interest, and use those heat maps to classify objects of interest (see Steps 2, 3, and 4 in chapter Validate the model).

Learn more:

Convolutional Neural Networks - Deep Learning Tutorial

Convolutional Neural Networks - Deep Learning Algorithms (Reference Book)

Convolutional neural networks - Deep Learning Features (Reference Book)

eCognition tv - Deep Learning webinars and more on our website